VXLAN Unicast, Hybrid and Multicast replication modes with NSX: the IGMP querier explained

Hi all,

Introduction

This is a topic that has been discussed a lot on different platforms, but I still had some difficulty understanding how it works in reality, so I decided to do my own write-up based on a meeting I had with Bal Birdy and Abdullah Abdullah (the only guy I know who has the same name twice).

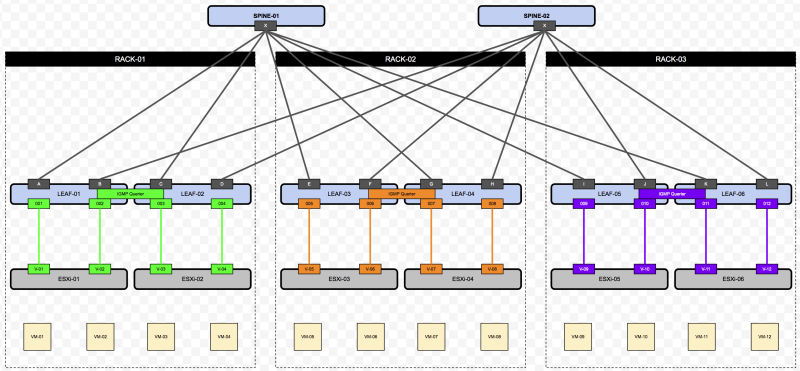

My main question was: “Why do we need an IGMP querier when we set Hybrid as the replication mode for multi-destination VM traffic?” The answer that the “NSX Reference Design Version 3.0” gives on page 41 is:

That answer was too high-level for me; I really wanted to understand more of the WHY, and also a bit of the HOW.

So here we go!

NSX and VXLAN

BUM

So let's first be clear on WHAT BUM traffic is and WHY it is generated. BUM stands for Broadcast, Unknown unicast and Multicast. Broadcast and multicast frames are multi-destination by nature. A unicast frame becomes "unknown unicast" when its destination MAC address (of the VM the source VM is trying to reach) has no matching entry in the local ESXi host tables (VTEP, MAC and ARP tables) and the NSX Controllers have not supplied the required information. In all three cases, the host floods the frame to all other hosts that have VTEPs participating in that VXLAN (logical switch).
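To make the three BUM cases concrete, here is a small Python sketch of how a destination MAC could be classified. It is an illustration, not NSX code: `known_macs` is a hypothetical stand-in for the host's local tables plus whatever the controllers reported. The one hard fact it relies on is Ethernet addressing: the all-ones address is broadcast, and a set least-significant bit in the first octet (the I/G bit) marks a group (multicast) address.

```python
from __future__ import annotations

BROADCAST_MAC = "ff:ff:ff:ff:ff:ff"


def classify_frame(dst_mac: str, known_macs: set[str]) -> str:
    """Return 'broadcast', 'multicast', 'known unicast' or 'unknown unicast'.

    known_macs is a hypothetical stand-in for the host's MAC/VTEP tables
    plus the information received from the NSX Controllers.
    """
    mac = dst_mac.lower()
    if mac == BROADCAST_MAC:  # check first: broadcast also has the I/G bit set
        return "broadcast"
    first_octet = int(mac.split(":")[0], 16)
    if first_octet & 0x01:  # I/G bit set -> group (multicast) address
        return "multicast"
    if mac in known_macs:
        return "known unicast"
    return "unknown unicast"


def is_bum(dst_mac: str, known_macs: set[str]) -> bool:
    """Everything except a known unicast destination is BUM traffic."""
    return classify_frame(dst_mac, known_macs) != "known unicast"
```

With `known = {"00:50:56:aa:bb:01"}`, a frame to `00:50:56:aa:bb:01` is delivered as normal unicast, while frames to `ff:ff:ff:ff:ff:ff`, `01:00:5e:01:01:0a` or an unlearned `00:50:56:aa:bb:99` all have to be replicated.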

UNANSWERED QUESTION: What if the destination VM is NOT in the same VXLAN? BUM replication will not kick in then, right? What happens instead?

How BUM traffic is replicated depends on the replication mode.

Unicast

Physical Network Load

In this example, the VTEP interfaces of the hosts reside on the same L2 segment. The replication mode for multi-destination VM traffic is set to Unicast.

In Unicast replication mode, the physical network is not used to replicate BUM traffic; the replication is done on the hosts, and the communication between the host VTEPs is strictly unicast. Because the NSX Controllers are used, a feature called ARP suppression is available: the host can answer ARP requests locally with the IP-to-MAC information it gets from the controllers, which reduces the amount of ARP broadcast traffic that has to be replicated between the VTEP interfaces of the hosts.
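A minimal sketch of what "replication on the host" means in NSX-v Unicast mode: the source host sends a separate unicast copy to every other VTEP in its own segment, and one copy per remote segment to a proxy VTEP there (the UTEP), which then replicates inside its own segment. The `members` data model is hypothetical, and picking the lowest address as UTEP is an arbitrary choice for this example, not NSX's selection logic.

```python
from collections import defaultdict

# Hypothetical example: VTEP IP -> VTEP subnet, for all VTEPs on the logical switch.
EXAMPLE_MEMBERS = {
    "10.0.1.11": "10.0.1.0/24",
    "10.0.1.12": "10.0.1.0/24",
    "10.0.1.13": "10.0.1.0/24",
    "10.0.2.21": "10.0.2.0/24",
    "10.0.2.22": "10.0.2.0/24",
    "10.0.3.31": "10.0.3.0/24",
}


def unicast_replication_plan(source_vtep, members):
    """Return the (destination VTEP, role) copies the source host sends itself."""
    src_segment = members[source_vtep]
    by_segment = defaultdict(list)
    for vtep, segment in members.items():
        if vtep != source_vtep:
            by_segment[segment].append(vtep)

    plan = []
    # Every other VTEP in the source's own segment gets its own unicast copy.
    for vtep in sorted(by_segment.pop(src_segment, [])):
        plan.append((vtep, "unicast copy"))
    # One copy per remote segment goes to a UTEP, which replicates locally.
    for segment in sorted(by_segment):
        utep = min(by_segment[segment])  # arbitrary choice for this sketch
        plan.append((utep, "UTEP for " + segment))
    return plan
```

For `unicast_replication_plan("10.0.1.11", EXAMPLE_MEMBERS)` the source pNIC sends four copies (two local, two to UTEPs) to reach five other VTEPs; with many VTEPs per segment, this is exactly the extra load on the host interfaces discussed next.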

With the Unicast replication mode, we need to take into account that more load is put on the physical interfaces of the ESXi hosts because of the replication overhead. Some network cards support VXLAN offload features that improve performance.

Using VXLAN offloading allows you to use TCP offloading mechanisms like TCP Segmentation Offload (TSO) and Checksum Offload (CSO), because the pNIC is able to ‘look into’ encapsulated VXLAN packets. That results in lower CPU utilization and a possible performance gain. (Source)

Receive Side Scaling (RSS) resolves the single-thread bottleneck by allowing the packets received by a pNIC to be distributed across multiple CPU cores. (Source)
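Why RSS helps with VXLAN at all: between two VTEPs the outer IP addresses never change, so a NIC that hashed only those would put all tunnel traffic on one queue. RFC 7348 recommends deriving the outer UDP source port from a hash of the inner packet (in the 49152-65535 range), so different inner flows get different outer ports. The sketch below assumes the NIC hashes the outer UDP ports too, and uses `zlib.crc32` as a stand-in for the Toeplitz hash real NICs typically use. Port 4789 is the IANA-assigned VXLAN port; the port in a given NSX deployment may differ.

```python
import zlib

VXLAN_PORT = 4789  # IANA VXLAN port; an assumption for this example


def flow_hash(*fields):
    # crc32 is a stand-in; real NICs typically use a Toeplitz hash for RSS.
    return zlib.crc32("|".join(map(str, fields)).encode())


def vxlan_source_port(inner_flow):
    """Outer UDP source port derived from the inner flow (RFC 7348 style)."""
    return 49152 + flow_hash(*inner_flow) % 16384


def rss_queue(outer_src, outer_dst, src_port, dst_port, n_queues):
    """Receive queue chosen from the outer 4-tuple (assumes UDP ports are hashed)."""
    return flow_hash(outer_src, outer_dst, src_port, dst_port) % n_queues


def queues_used(n_flows, n_queues):
    """Queues hit by n_flows inner flows between one fixed VTEP pair."""
    queues = set()
    for i in range(n_flows):
        inner = ("172.16.10.5", "172.16.10.%d" % (10 + i), "tcp", 40000 + i, 443)
        sport = vxlan_source_port(inner)
        queues.add(rss_queue("10.0.1.11", "10.0.1.12", sport, VXLAN_PORT, n_queues))
    return queues
```

Even though every packet travels between the same two VTEP addresses, `queues_used(64, 4)` will normally report several queues, so the receive work can be spread across CPU cores.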

More about maximizing performance in VXLAN overlay networks can be found here.

If we want to fully offload this replication from the host physical interfaces, we need to use the Multicast replication mode; if we want to partly offload it, we need to use Hybrid mode.

In Unicast mode, the physical network (L2 and/or L3) is therefore not responsible for replicating BUM traffic; it only carries unicast VXLAN packets between the VTEPs.
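To put rough numbers on "fully" versus "partly" offloading, here is a simplified count of how many copies of one BUM frame the source host's pNIC has to send in each mode. The model is my own simplification: `local_peers` is the number of other VTEPs on the logical switch in the source's L2 segment, `remote_segments` the number of other L3 segments with members, and second-stage replication by UTEPs/MTEPs is not counted.

```python
def copies_sent_by_source(mode, local_peers, remote_segments):
    """Copies of one BUM frame leaving the source host's pNIC (simplified)."""
    if mode == "unicast":
        # One unicast copy per local peer, plus one per remote segment (UTEP).
        return local_peers + remote_segments
    if mode == "hybrid":
        # One L2 multicast frame locally, plus one unicast per remote segment (MTEP).
        return (1 if local_peers else 0) + remote_segments
    if mode == "multicast":
        # One multicast frame; the physical network does all replication.
        return 1 if (local_peers or remote_segments) else 0
    raise ValueError(f"unknown replication mode: {mode}")
```

With 20 other VTEPs in the local segment and 3 remote segments, this gives 23 copies in Unicast mode, 4 in Hybrid mode and 1 in Multicast mode.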

Hybrid

Hybrid is a combination of Unicast and Multicast.

As mentioned before, the Hybrid replication mode offloads part of the replication work from the ESXi host physical NICs. It does this by leveraging L2 multicast on the physical network. Because the NSX Controllers are still used, the ARP suppression feature is still available.
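A minimal sketch of the ARP suppression idea in Unicast and Hybrid mode: before an ARP request is replicated as BUM traffic, the host first checks what it already knows and what the controllers know. The function and table names are hypothetical; the point is only that flooding happens when nobody has the answer.

```python
def resolve_arp(target_ip, local_cache, controller_table):
    """Hypothetical host-side ARP handling with ARP suppression.

    Returns (mac, flooded); flooded is True only when the ARP request
    must be replicated to other VTEPs as BUM traffic.
    """
    if target_ip in local_cache:
        return local_cache[target_ip], False
    mac = controller_table.get(target_ip)
    if mac is not None:
        local_cache[target_ip] = mac  # remember the controller's answer
        return mac, False
    return None, True  # unknown everywhere: fall back to flooding
```

In Multicast mode there are no controllers to ask, so every ARP request takes the last branch.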

QUESTION: So when is unicast used and when is multicast used?

ANSWER: Multicast is used between VTEPs that are in the SAME L2 segment (VLAN). Unicast is used to reach VTEPs that are in a DIFFERENT L3 segment (subnet).

When the VTEPs are in a DIFFERENT L3 segment, the frames are forwarded to one selected host in that other segment, the MTEP (Multicast Tunnel End Point), which is responsible for replicating the frames within its own segment. The MTEP does NOT do this through unicast but, again, through L2 multicast: it sends the frame to the switch local to that ESXi host, and from there the whole L2 multicast process starts for that segment.
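The same hypothetical VTEP layout as a Unicast-mode example, now replicated in Hybrid mode: one L2 multicast frame for the local segment, and one unicast copy per remote segment to an MTEP that re-sends it as L2 multicast there. As before, the data model and choosing the lowest address as MTEP are assumptions for illustration, not NSX's selection logic.

```python
from collections import defaultdict

# Hypothetical example: VTEP IP -> VTEP subnet, for all VTEPs on the logical switch.
EXAMPLE_MEMBERS = {
    "10.0.1.11": "10.0.1.0/24",
    "10.0.1.12": "10.0.1.0/24",
    "10.0.1.13": "10.0.1.0/24",
    "10.0.2.21": "10.0.2.0/24",
    "10.0.2.22": "10.0.2.0/24",
    "10.0.3.31": "10.0.3.0/24",
}


def hybrid_replication_plan(source_vtep, members, group):
    """Return the (destination, action) frames the source host sends itself."""
    src_segment = members[source_vtep]
    local_peers = []
    remote = defaultdict(list)
    for vtep, segment in members.items():
        if vtep == source_vtep:
            continue
        if segment == src_segment:
            local_peers.append(vtep)
        else:
            remote[segment].append(vtep)

    plan = []
    if local_peers:
        # One frame to the group address; the IGMP snooping switches replicate it.
        plan.append((group, "L2 multicast in " + src_segment))
    for segment in sorted(remote):
        mtep = min(remote[segment])  # arbitrary MTEP choice for this sketch
        plan.append((mtep, "unicast to MTEP, re-sent as L2 multicast in " + segment))
    return plan
```

For `hybrid_replication_plan("10.0.1.11", EXAMPLE_MEMBERS, "239.1.1.10")` the source sends three frames instead of the four it needed in Unicast mode, and that gap grows with the number of VTEPs per segment.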

QUESTION: So why do we need to configure an IGMP querier (per VTEP VLAN) when we are using L2 multicast?

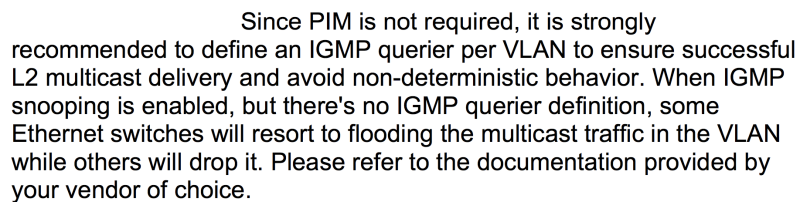

ANSWER: To answer this question, I created the following simplified drawing. This topology is just an example to get my point across; it is not a typical design for a real-life production environment.

What you see here are two switches that are connected to each other. ESXi-01 and ESXi-02 each have two VTEP interfaces. For simplicity, I made sure in the drawing that each of these interfaces lands on a different physical switch port.

When L2 multicast is used, each VTEP interface (on ESXi-01, for example, V-01 and V-02) sends an IGMP join request to Switch-01 for each Logical Switch, because every Logical Switch maps to a multicast group address. Let's assume we create one Logical Switch called “LOGICAL-SWITCH-01”, which translates into the multicast group 239.1.1.10. When “VM-01” on host “ESXi-01” is connected to “LOGICAL-SWITCH-01”, the host sends an IGMP join message from its VTEP interface to switch port “001” of “Switch-01”. This tells “Switch-01” to which ports it needs to forward BUM traffic for VMs on “LOGICAL-SWITCH-01” (multicast group 239.1.1.10).

In other words, the VTEP interfaces subscribe to 239.1.1.10, and “Switch-01” keeps a locally significant table of which switch ports are subscribed to which multicast group. One interface can have multiple subscriptions for multiple Logical Switches.
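A small sketch of the two pieces involved here. First, the L2 switch actually forwards on the multicast MAC derived from the group: `01:00:5e` followed by the low 23 bits of the IPv4 group address (standard IPv4-to-Ethernet multicast mapping). Second, the switch's locally significant group-to-ports table. The logical-switch-to-group dictionary and port “002” for V-02 are hypothetical; in NSX the group comes from the configured multicast range.

```python
import ipaddress
from collections import defaultdict

# Hypothetical mapping of logical switch -> multicast group, as in the example.
LOGICAL_SWITCH_GROUPS = {"LOGICAL-SWITCH-01": "239.1.1.10"}


def multicast_mac(group):
    """Map an IPv4 multicast group to its Ethernet MAC (01:00:5e + low 23 bits)."""
    addr = ipaddress.IPv4Address(group)
    if not addr.is_multicast:
        raise ValueError(f"{group} is not an IPv4 multicast address")
    low23 = int(addr) & 0x7FFFFF
    return "01:00:5e:%02x:%02x:%02x" % (low23 >> 16, (low23 >> 8) & 0xFF, low23 & 0xFF)


class SnoopingTable:
    """Locally significant group -> subscribed ports table of one switch."""

    def __init__(self, switch_name):
        self.switch_name = switch_name
        self._ports = defaultdict(set)

    def join(self, port, group):
        # An IGMP join seen on a port subscribes that port to the group.
        self._ports[group].add(port)

    def ports_for(self, group):
        return sorted(self._ports.get(group, ()))


switch_01 = SnoopingTable("Switch-01")
group = LOGICAL_SWITCH_GROUPS["LOGICAL-SWITCH-01"]
switch_01.join("001", group)  # V-01 on ESXi-01
switch_01.join("002", group)  # V-02 on ESXi-01 (port name hypothetical)
```

Here `multicast_mac("239.1.1.10")` is `01:00:5e:01:01:0a`. Because only 23 bits are kept, 239.129.1.10 maps to the same MAC, which is worth keeping in mind when choosing the multicast range.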

So why do we need an IGMP querier then?

When “VM-05” on “ESXi-02” is also connected to “LOGICAL-SWITCH-01”, ESXi-02 sends the same IGMP join, and “Switch-02” does the same as “Switch-01”: it creates its own LOCAL table that keeps track of the multicast subscriptions on its ports. This information is not shared with “Switch-01”.

Now, on “Switch-01”, switch ports “B” and “C” do not have an IGMP join subscription, so “Switch-01” does not know that there are hosts on the other switch that are also interested in “LOGICAL-SWITCH-01”. Vice versa, port “D” on “Switch-02” is not subscribed either, so BUM traffic for 239.1.1.10 is never forwarded between the two switches.

Now, this is where the IGMP querier comes into play…

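The IGMP querier is configured per VLAN to overcome this problem. The querier periodically sends IGMP general queries into the VLAN, and the VTEP interfaces answer with membership reports. A typical IGMP snooping switch treats the port on which it receives these queries as the path towards the multicast router and forwards membership reports and multicast traffic out of that port. As a result, subscriptions learned on one switch also become known on the other side of the inter-switch link, and BUM traffic for 239.1.1.10 is forwarded between the two switches. The periodic queries also keep the snooping entries from aging out. In the drawing, you can see where the querier is configured; this is typically on a device where you can configure an IP address in that VLAN.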

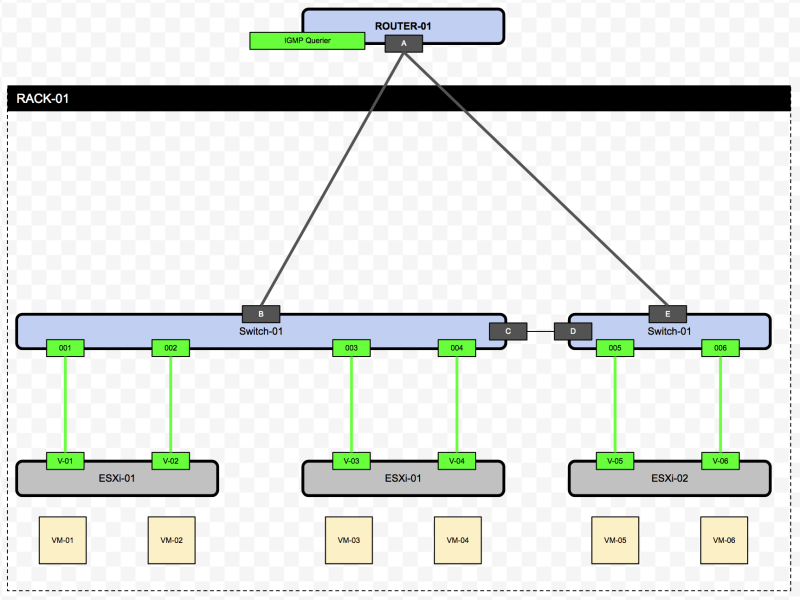
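To check the querier mechanism for myself, here is a very simplified Python model of two IGMP snooping switches. It assumes the common snooping behaviour described in RFC 4541: a switch learns its multicast-router port from incoming queries, forwards membership reports only towards that port, and forwards multicast data to subscribed ports plus the router port. Real switches differ in details (for example, some flood unregistered multicast), the inter-switch port names are hypothetical, and the querier is assumed to sit on Switch-01. Reports are sent after the query, as happens when VTEPs answer it.

```python
from collections import defaultdict


class SnoopingSwitch:
    """Very simplified IGMP snooping switch (loop-free topologies only)."""

    def __init__(self, name):
        self.name = name
        self.members = defaultdict(set)  # group -> ports that sent reports
        self.mrouter_ports = set()       # ports on which queries arrived
        self.links = {}                  # local port -> (neighbour, its port)

    def connect(self, port, other, other_port):
        self.links[port] = (other, other_port)
        other.links[other_port] = (self, port)

    def send_general_query(self):
        # This switch hosts the querier: send the query out of every link.
        for nbr, nbr_port in self.links.values():
            nbr.receive_query(nbr_port)

    def receive_query(self, in_port):
        # Remember where the querier is and pass the query on.
        self.mrouter_ports.add(in_port)
        for port, (nbr, nbr_port) in self.links.items():
            if port != in_port:
                nbr.receive_query(nbr_port)

    def receive_report(self, in_port, group):
        self.members[group].add(in_port)
        # Reports are forwarded only towards the querier (mrouter ports).
        for port in self.mrouter_ports - {in_port}:
            nbr, nbr_port = self.links[port]
            nbr.receive_report(nbr_port, group)

    def egress_ports(self, group, in_port):
        # Multicast data goes to subscribed ports and to mrouter ports.
        return sorted((self.members[group] | self.mrouter_ports) - {in_port})


def build_topology(with_querier, group="239.1.1.10"):
    sw1, sw2 = SnoopingSwitch("Switch-01"), SnoopingSwitch("Switch-02")
    sw1.connect("isl", sw2, "isl")      # hypothetical inter-switch port names
    if with_querier:
        sw1.send_general_query()        # querier assumed to sit on Switch-01
    sw1.receive_report("001", group)    # VTEP of ESXi-01
    sw2.receive_report("005", group)    # VTEP of ESXi-02 (port name hypothetical)
    return sw1, sw2
```

Without a querier, `build_topology(False)[0].egress_ports("239.1.1.10", "001")` is empty, so BUM traffic from ESXi-01 never leaves Switch-01. With the querier, the same call returns `["isl"]`, and Switch-02 then forwards it to port `"005"`.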

To extend the example, the drawing below shows how this works with two different VLANs.

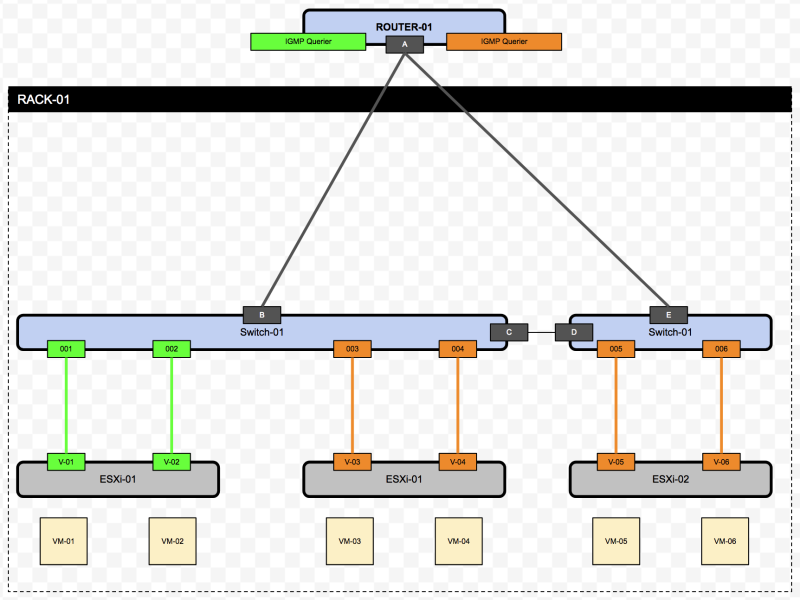

To put this more into perspective, I have also created the following drawing of a Leaf / Spine architecture.

Here we see that the VTEP VLAN and subnet are typically different for each rack: Rack 1 is in the GREEN VLAN / subnet, Rack 2 in the ORANGE one and Rack 3 in the PURPLE one. So if we need an IGMP querier per VLAN, we only need one querier per rack.
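The "one querier per VTEP VLAN" rule is easy to turn into a quick sanity check. A sketch, assuming a hypothetical inventory where each rack has one VTEP VLAN (the VLAN IDs 101, 102 and 103 for GREEN, ORANGE and PURPLE are made up):

```python
def queriers_needed(vtep_vlan_per_rack):
    """One IGMP querier per distinct VTEP VLAN."""
    return len(set(vtep_vlan_per_rack.values()))


def racks_without_querier(vtep_vlan_per_rack, querier_vlans):
    """Racks whose VTEP VLAN has no querier configured (Hybrid mode would break there)."""
    return sorted(
        rack for rack, vlan in vtep_vlan_per_rack.items() if vlan not in querier_vlans
    )


# Hypothetical inventory: GREEN = 101, ORANGE = 102, PURPLE = 103.
RACK_VLANS = {"Rack 1": 101, "Rack 2": 102, "Rack 3": 103}
```

With queriers configured only in VLANs 101 and 102, `racks_without_querier(RACK_VLANS, {101, 102})` points at Rack 3.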

Multicast

Multicast mode does not use the controllers at all, nor do you need to configure a separate IGMP querier (the PIM-enabled router interface in each VTEP VLAN normally takes on that role). Because the controllers are not required to be deployed for this mode, the ARP suppression feature cannot be used. All the ESXi hosts need to do is join the multicast groups, and the physical network takes care of the rest.

For the Multicast replication mode, however, the physical network needs to be configured for multicast. This means that L3 multicast routing (PIM) needs to be configured end-to-end between the VTEP subnets.
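As a recap, here is the comparison from this article written down as data, with a helper that lists what the physical network has to provide per mode. This is my own summary in code form, not an NSX API; the field names are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeRequirements:
    controllers_required: bool
    arp_suppression: bool
    l2_multicast: bool          # IGMP snooping + querier in the VTEP VLANs
    l3_multicast_routing: bool  # PIM between the VTEP subnets


REQUIREMENTS = {
    "unicast": ModeRequirements(True, True, False, False),
    "hybrid": ModeRequirements(True, True, True, False),
    # In Multicast mode the querier role is normally taken by the PIM router.
    "multicast": ModeRequirements(False, False, True, True),
}


def physical_network_tasks(mode):
    """What the physical network must be configured for in the given mode."""
    req = REQUIREMENTS[mode]
    tasks = []
    if req.l2_multicast:
        tasks.append("IGMP snooping with a querier in each VTEP VLAN")
    if req.l3_multicast_routing:
        tasks.append("PIM multicast routing end-to-end")
    return tasks
```

`physical_network_tasks("unicast")` is empty, Hybrid adds the per-VLAN querier, and Multicast adds PIM on top of that.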

Thank you, Bal and Abdullah, for reviewing this article and for helping me get a better understanding of this topic.

Other sources:

- https://telecomoccasionally.wordpress.com/2015/01/11/nsx-for-vsphere-vxlan-control-plane-modes-explained/

- http://planetvm.net/blog/?p=2894

- http://wahlnetwork.com/2014/07/07/working-nsx-configuring-vxlan-vteps/

- https://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/products/nsx/vmw-nsx-network-virtualization-design-guide.pdf (pages 35-45)